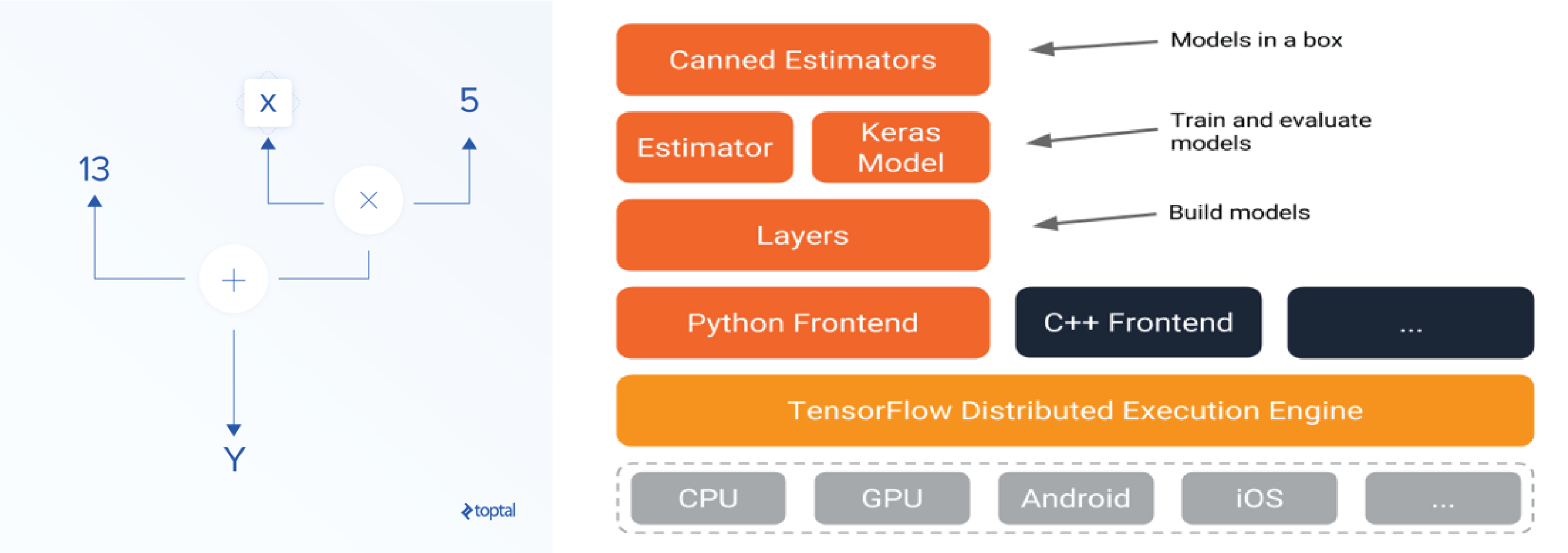

The TensorFlow Graph Editor library allows for modification of an existing `tf.Graph` instance in-place. The author's GitHub username is purpledog.

Library overview

Appending new nodes is the only graph editing operation allowed by the TensorFlow core library. The Graph Editor library is an attempt to allow for other kinds of editing operations, namely rerouting and transforming.

Rerouting is a local operation that consists of re-plugging existing tensors (the edges of the graph). Operations (the nodes) are not modified by this operation. For example, rerouting can be used to insert an operation adding noise in place of an existing tensor.

Transforming is a global operation that consists of transforming a graph into another. By default, a transformation is a simple copy, but it can be customized to achieve other goals. For instance, a graph can be transformed into another one in which noise is added after all the operations of a specific type.

Once the image is prepared as a tensor, we can send it through the model for a prediction. The tensor names are part of the model and cannot be changed. The output tensor is looked up by name, `prob_tensor = _tensor_by_name(output_layer)`. If the lookup fails, the script prints `"Couldn't find classification output layer: " + output_layer + "."` followed by `"Verify this a model exported from an Object Detection project."`

The results of running the image tensor through the model will then need to be mapped back to the labels. The index of the most likely label is `highest_probability_index = np.argmax(predictions)`. Or you can print out all of the results, mapping labels to probabilities, with each probability stored in `truncated_probablity` as an `np.float64`.
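The mapping from predictions back to labels can be sketched as follows. This is a minimal illustration, not the exported script: `top_label` and `label_probabilities` are hypothetical helper names, the sample `labels` and `predictions` values are made up, and rounding to 8 decimals is an assumption. It also assumes the model returns a single row of probabilities in the same order as the labels.

```python
import numpy as np

def top_label(predictions, labels):
    """Return the label whose probability is highest."""
    probs = np.ravel(predictions)  # flatten a (1, n) batch result to (n,)
    return labels[int(np.argmax(probs))]

def label_probabilities(predictions, labels, decimals=8):
    """Pair every label with its probability, rounded for display (assumed precision)."""
    probs = np.ravel(predictions)
    return [(label, float(np.round(p, decimals))) for label, p in zip(labels, probs)]

# Made-up sample values, for illustration only.
labels = ["cat", "dog", "bird"]
predictions = np.array([[0.1, 0.7, 0.2]])

print("Classified as: " + top_label(predictions, labels))
for label, probability in label_probabilities(predictions, labels):
    print(label, probability)
```

Flattening with `np.ravel` keeps the helpers indifferent to whether the result arrives as a batch of one or as a plain vector.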

The orientation handling tests two groups of zero-based orientation values: `if orientation == 2 or orientation == 3 or orientation == 6 or orientation == 7:` and `if orientation == 1 or orientation == 2 or orientation == 5 or orientation == 6:`.
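One way to read these two checks is sketched below on a NumPy pixel array. The text does not show what each branch does, so the actions here are assumptions based on the usual meaning of the EXIF orientation values: a transpose for zero-based values of 4 and above, a top-to-bottom flip for the first group, and a left-to-right flip for the second. `apply_exif_orientation` is a hypothetical name.

```python
import numpy as np

def apply_exif_orientation(pixels, orientation):
    """Return pixels corrected for a 1-based EXIF orientation value (1 to 8).

    The transpose/flip assigned to each group is an assumption drawn from
    the EXIF convention, not taken from the original script.
    """
    zero_based = orientation - 1  # EXIF values are 1-based
    if zero_based >= 4:
        pixels = np.swapaxes(pixels, 0, 1)  # transpose rows and columns
    if zero_based in (2, 3, 6, 7):
        pixels = np.flipud(pixels)  # flip top to bottom
    if zero_based in (1, 2, 5, 6):
        pixels = np.fliplr(pixels)  # flip left to right
    return pixels

sample = np.array([[1, 2],
                   [3, 4]])
print(apply_exif_orientation(sample, 3))  # both flips: a 180 degree rotation
print(apply_exif_orientation(sample, 6))  # transpose, then left-to-right flip
```

`np.swapaxes` is used instead of `.T` so the same function works for grayscale (2-D) and color (height, width, channels) arrays.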

The `model.pb` and `labels.txt` files represent the trained model and the classification labels. The first step is to load the model into your project. The loading code goes in a new Python script. The file names are set to the default names from exported models; update them as needed. The model file is opened with `with tf.io.gfile.GFile(filename, 'rb') as f:`.

There are a few steps you need to take to prepare the image for prediction. These steps mimic the image manipulation performed during training.

1. Open the file and create an image in the BGR color space. The image is opened with PIL (`from PIL import Image`), and its orientation is updated based on EXIF tags if the file has orientation info.
2. Handle images with a dimension greater than 1600. If the image has either width or height greater than 1600, resize it down, respecting aspect ratio, so that the largest dimension is 1600: `image = resize_down_to_1600_max_dim(image)`.
3. Crop the largest center square: `max_square_image = crop_center(image, min_dim, min_dim)`.
4. Resize that square down to 256x256: `augmented_image = resize_to_256_square(max_square_image)`.
5. Crop the center for the specific input size of the model. The input size comes from the model's input tensor, `input_tensor_shape = _tensor_by_name('Placeholder:0').shape.as_list()`, and the crop is `augmented_image = crop_center(augmented_image, network_input_size, network_input_size)`.

The steps above use the following helper functions. `convert_to_opencv(image)` prepares the image for OpenCV; an RGB -> BGR conversion is performed as well. The two resize helpers return `cv2.resize(image, new_size, interpolation = cv2.INTER_LINEAR)` and `cv2.resize(image, (256, 256), interpolation = cv2.INTER_LINEAR)`. The orientation helper acts only `if (exif is not None and exif_orientation_tag in exif):`, reads `orientation = exif.get(exif_orientation_tag, 1)`, and, because orientation is 1-based, shifts it to zero-based and flips or transposes based on the 0-based values.
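The cropping and size arithmetic in these steps can be sketched without OpenCV. The helper bodies are not shown in the text, so this is an assumed implementation: `crop_center` slices a NumPy array around its center, and `size_for_max_dim` is a hypothetical helper that only computes the target `(width, height)` one might pass to `cv2.resize`.

```python
import numpy as np

def crop_center(img, crop_w, crop_h):
    """Return the crop_w x crop_h region around the center of img (assumed behavior)."""
    h, w = img.shape[:2]
    start_x = w // 2 - crop_w // 2
    start_y = h // 2 - crop_h // 2
    return img[start_y:start_y + crop_h, start_x:start_x + crop_w]

def size_for_max_dim(w, h, max_dim=1600):
    """Return (width, height) scaled so the largest dimension is at most max_dim."""
    if w <= max_dim and h <= max_dim:
        return (w, h)  # already small enough, keep as is
    if h > w:
        return (max_dim * w // h, max_dim)
    return (max_dim, max_dim * h // w)

# A blank 1800x1200 stand-in for a loaded BGR image.
image = np.zeros((1200, 1800, 3), dtype=np.uint8)
h, w = image.shape[:2]
min_dim = min(w, h)
square = crop_center(image, min_dim, min_dim)

print(square.shape)
print(size_for_max_dim(3200, 2400))
```

Integer division keeps the computed sizes whole, which matters because image dimensions must be integers.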

The zip file contains a `model.pb` and a `labels.txt` file. Next, you'll need to install the following packages: `pip install tensorflow`
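As a small illustration of working with the export, the labels can be read straight from the archive with the standard library. This sketch assumes `labels.txt` sits at the root of the zip, is UTF-8 encoded, and holds one label per line. `read_labels_from_export` is a hypothetical helper, and the demo archive is a throwaway file built only for the example.

```python
import os
import tempfile
import zipfile

def read_labels_from_export(zip_path, labels_name="labels.txt"):
    """Read one label per line from the labels file inside the zip (assumed layout)."""
    with zipfile.ZipFile(zip_path) as archive:
        text = archive.read(labels_name).decode("utf-8")
    # Skip blank lines and strip trailing whitespace or newlines.
    return [line.strip() for line in text.splitlines() if line.strip()]

# Demo with a throwaway archive that mimics the described contents.
with tempfile.TemporaryDirectory() as tmp:
    demo_zip = os.path.join(tmp, "export.zip")
    with zipfile.ZipFile(demo_zip, "w") as archive:
        archive.writestr("model.pb", b"")
        archive.writestr("labels.txt", "cat\ndog\n")
    demo_labels = read_labels_from_export(demo_zip)

print(demo_labels)
```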
